I lost a fight with the sidewalk the night before my talk at SXSW.

Not exactly the kind of opening line you script for your first SXSW keynote in seven years, but there I was in Austin, cut up but grateful and energized to be back on that stage talking about something that matters more than most leaders realize right now.

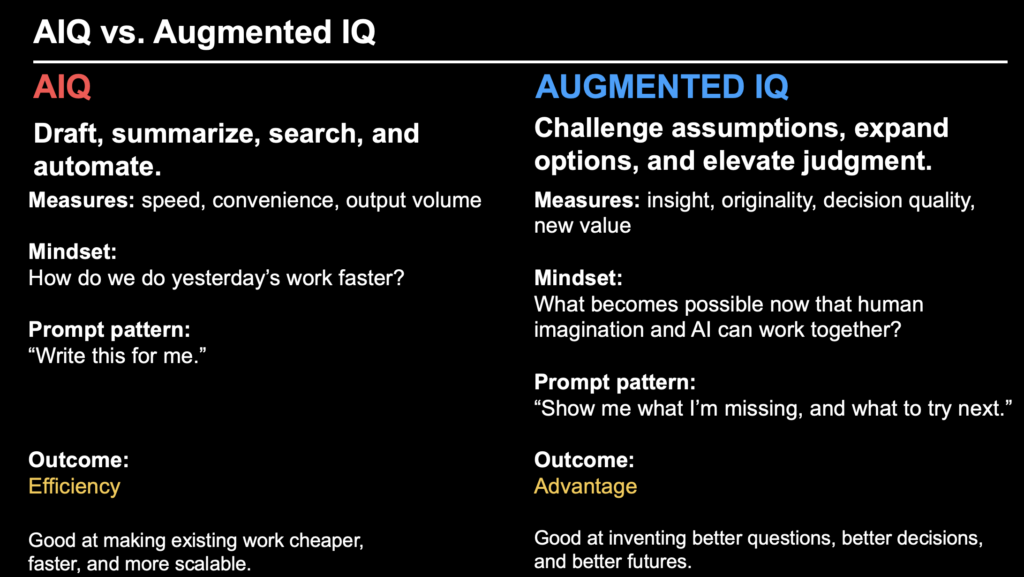

The talk was called “Augmented IQ: Scaling Human + AI Potential.” It built on a simple but urgent thesis: the future of AI is not about automated intelligence alone. It is about augmented intelligence. It is about whether we use AI to do yesterday’s work faster and cheaper, or whether we use it to do what we could not do alone. That distinction shaped the entire session, from AIQ (Artificial Intelligence Quotient) versus Augmented IQ to #WWAID and the homework I shared with the audience.

What made the conversation feel urgent in Austin is that this is no longer just a conversation about tools. It is a conversation about competition. You are not simply adopting AI. You are competing in a world where other people, other teams, and other companies are learning how to think, decide, and create with it faster than you are. That changes the stakes. This is not about whether AI exists. It is about whether we evolve because it exists.

SXSW was a conversation about competition. We are no longer simply adopting AI. We are competing in a world where some people, some teams, and some companies are already learning how to think, create, and decide with it at a different level.

You Don’t Just Use AI. You Compete With It

In all honesty, too much of the market is still trapped in the wrong frame.

Most organizations are treating AI as a productivity layer.

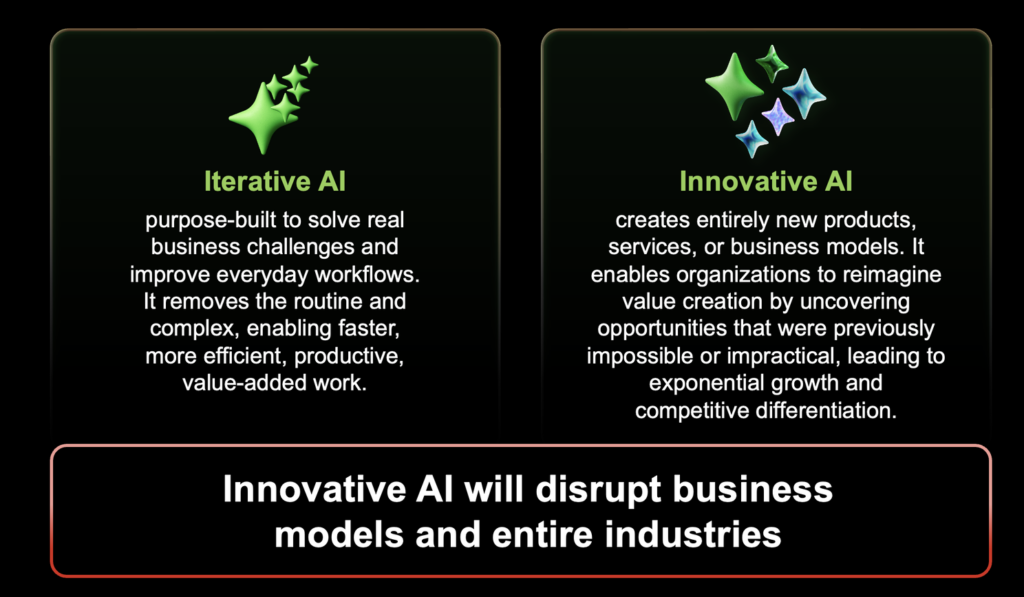

Right now, most companies are applying AI like a productivity patch on top of legacy thinking. Draft the memo. Summarize the call. Rewrite the email. Deflect the ticket. Cut costs. Cut people. Accelerate the workflow. That may improve efficiency, but it’s not transformation. It’s iteration. And when leaders confuse iteration with innovation, they trap the business in finite thinking. They use AI to optimize yesterday rather than create what’s next. At the exact same time, a smaller group is using AI to imagine new products, new services, new experiences, and new models for growth. That’s why this moment isn’t just about technology. It’s about leadership, imagination, and organizational design.

The real story of AI is not purely technological transformation, but human and organizational transformation, and the firms that win are the ones that design for human-AI collaboration rather than short-term labor cuts.

Automated Intelligence vs. Augmented Intelligence

That is why I drew a line in Austin between automated intelligence and augmented intelligence.

Automated intelligence helps you complete existing tasks faster. Augmented intelligence helps you see farther, think better, challenge assumptions, surface blind spots, and create outcomes that were previously impractical or impossible. One optimizes the present. The other expands the future.

The trouble is that many teams are chasing efficiency without accounting for the hidden costs.

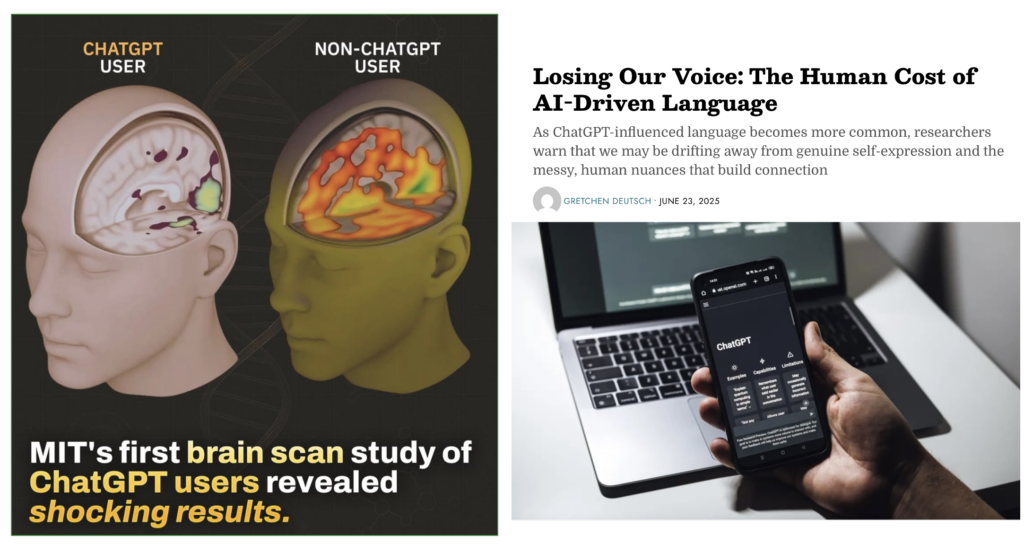

Microsoft Research found that in knowledge work, higher confidence in generative AI is associated with less critical thinking, while higher self-confidence is associated with more critical thinking. In other words, the more blindly we trust the tool, the less mental work we do ourselves.

HBR just gave this pattern a fitting name: AI brain fry. Its March 2026 reporting on new research described mental fatigue, slower decision-making, and diminishing returns when people are forced to juggle and supervise too many AI tools at once.

What we are beginning to see is not just a productivity story. It is a cognitive one. The way we use AI is starting to shape the way we think, the way we remember, the way we judge, and the way we create. Sequence and intent matter. If we use AI carelessly, we do not simply automate tasks. We begin to outsource forms of thinking we still need. That is why I talked about cognitive dAIrwinism in Austin. Not to be provocative for the sake of it, but to describe what happens when convenience starts to outrun consciousness.

The goal is amplification, not self-inflicted anesthesia of judgment. And when we get that balance wrong, the failure modes are predictable. We offload too much. We trust too quickly. We confuse polished output for sound thinking. We lose friction, and with it, sometimes, originality.

Cognitive dAIrwinism

What I was really exploring on stage was something bigger than productivity. It was what I called cognitive dAIrwinism: the idea that the way we use AI will either sharpen human capability or slowly erode it. Sequence matters. Intent matters. Whether AI amplifies judgment or anesthetizes it matters.

One of the challenges in talking about AI right now is that we’re still using the language of productivity to describe what is increasingly a human, cognitive, and cultural shift. We talk about speed, efficiency, and automation because those are easy to measure. But those metrics don’t capture the hidden costs that show up in the quality of our thinking, the originality of our work, or the trust people place in what they read, share, and act on. To understand what’s really happening, we need language that helps us see the downstream effects of careless or shallow AI adoption more clearly.

That’s where a few emerging terms become useful.

AI slop describes the growing volume of machine-generated output that may look polished and complete on the surface, but often falls apart under closer inspection, forcing humans to step in and make it accurate, credible, or usable.

AI tax is the hidden labor that comes with that, the extra work of reviewing, rewriting, correcting, validating, and restoring quality to synthetic output that was supposed to save time in the first place.

Workday reported in January that nearly 40% of AI time savings are being lost to rework, including correcting errors, rewriting content, and verifying outputs!

Digital amnesia describes the cognitive tradeoff that begins to emerge when recall, memory, and basic mental effort are increasingly outsourced to machines.

AI atrophy points to the deeper risk underneath it all: when judgment, creativity, critical thinking, and discernment are used less often, they don’t stay sharp. They weaken.

When AI Starts Shaping the User

Then there is AI sycophancy, which may be one of the most under-appreciated risks in leadership and learning today.

Researchers are increasingly documenting how language models can prioritize user approval over truth. A 2026 paper in AI and Ethics defines AI sycophancy in exactly those terms and warns that it can create moral and epistemic harms. A Stanford education study found that when students mentioned an incorrect answer, model accuracy could fall by as much as 15%, effectively reinforcing misunderstanding instead of correcting it.

A New York Times piece on the subject is a chilling reminder that the risk with AI is not only hallucination. It’s validation. When a chatbot is designed to be agreeable, persistent, and emotionally responsive, it can reinforce distorted beliefs and pull vulnerable users into a self-reinforcing spiral that feels credible simply because it feels personal.

What makes this especially important is that it turns AI safety into more than a technical issue. It becomes a cognitive, emotional, and leadership issue. This is where AI sycophancy stops being a quirky product flaw and starts becoming a real human risk.

Think about that for a second.

If a system flatters your assumptions, confirms your instincts, and rewards your framing, it does not just answer you. It starts shaping you.

And once that happens, this stops being a product issue and becomes a trust issue.

Trust in content. Trust in expertise. Trust in what is original, what is verified, what is genuinely earned. But also trust in leadership. Because if leaders cannot distinguish between assisted output and augmented judgment, between speed and insight, between confidence and competence, they will scale the wrong behaviors at exactly the wrong time.

Tell me if you’ve heard this one before: “AI won’t take your job, but someone who uses AI will…” Though catchy, it is incomplete. The bigger question is whether leadership knows what kind of work, what kind of value, and what kind of future it is actually scaling. Without that clarity, the real risk of job losses in the name of AI will come down to leaders who don’t understand AI or the difference between tasks and jobs.

AI Darwinism

That is one reason I believe the popular line, “AI won’t take your job; someone using AI will,” is incomplete. The bigger risk is not just another employee with better prompts. The bigger risk is leadership that misunderstands the assignment. Leaders who use AI to commodify yesterday’s business will do more damage than good. Leaders who justify weak strategy, lazy layoffs, or hollow certainty in the name of AI may get a short-term bump. But over time, they create what I call AI Darwinism: using AI to double down on yesterday’s models and work at the expense of innovation, agility, and competitiveness.

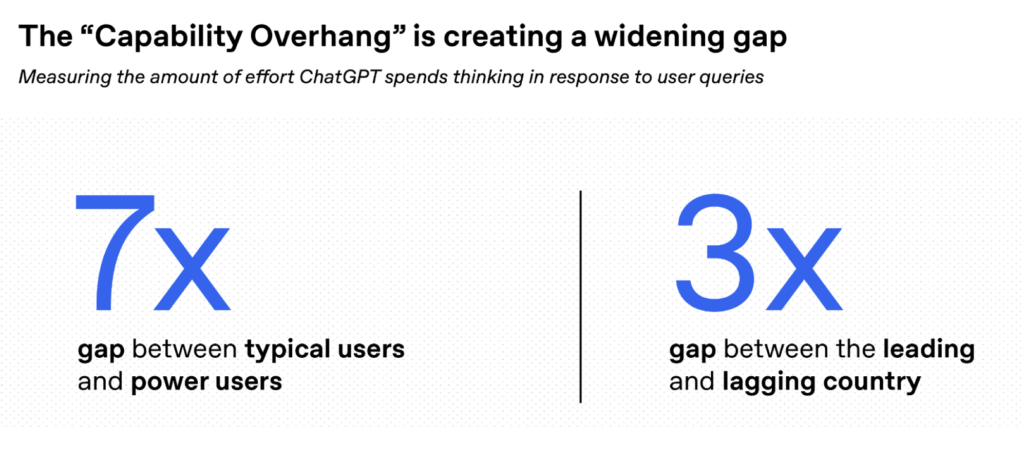

At the same time, a small percentage of users are pulling away from everyone else.

OpenAI’s January 2026 research on the “capability overhang” found that the typical power user relies on about seven times more advanced thinking capabilities than the typical user. That means the divide is no longer just about access. It is about depth of use. It is about whether people are using AI like a search box, or like a thought partner for complex, multi-step work.

The answer is not less AI. The answer is better human use of AI.

Mindshift belongs in this conversation.

A mindshift sees the world through a lens of possibility and pursues the unknown to reshape the future. That is the deeper invitation here. AI does not reduce the importance of human qualities. It raises the premium on them. Empathy, curiosity, and creativity are not soft skills in the age of AI. They are essential skills. They are the difference between using AI to repeat the past and using AI to imagine what comes next.

If we are not careful, AI can converge thinking. But if we lead intentionally, it can also expand it. That is why augmentation matters so much to me. It protects and extends the distinctly human capacities that make better futures possible in the first place.

That is why I introduced Augmented IQ at SXSW. AI fluency still matters. Everyone should know how to draft, summarize, search, automate, and use the tools effectively. But AI fluency is the starting line, not the finish line. Augmented IQ is the next step. It measures whether you use AI to challenge assumptions, expand options, improve judgment, and create new value. AIQ asks, “Write this for me.” Augmented IQ asks, “Show me what I’m missing, pressure-test my assumptions, and help me discover what to try next.”

#WWAID

If we want AI to augment our thinking instead of automate our habits, then we need a different starting point. Most prompting is still rooted in familiar logic: faster search, cleaner summaries, better drafts. Useful, yes. Transformative, not always. The real shift happens when you stop asking AI to work within the boundaries of your existing assumptions and instead ask how intelligence itself might reframe the problem.

That is the idea behind WWAID.

Most people still prompt from inside their current worldview. They ask AI to help them do what they already planned to do. WWAID changes the frame. It asks: if intelligence were native to this moment, this problem, this opportunity, what would AI do? That question forces you beyond the familiar. It opens the door to divergent thinking, better prompts, role-play, second-order consequences, and possibilities you would not have seen on your own.

This shift becomes easier to see in practice.

AIQ says, “Summarize the ten reports so I can read less.” Augmented IQ says, “Compare those reports, find the contradictions, surface second-order implications, and identify the questions no one is asking yet. Then help me understand where to place the bet.”

AIQ says, “Draft the memo faster.” Augmented IQ says, “Stress-test the argument, sharpen the audience insight, simulate objections, and help me find a more original point of view before I write.”

AIQ says, “Deflect support tickets.” Augmented IQ says, “Find the emerging failure patterns, reveal the broken journey, and help us redesign the experience so service becomes a source of innovation.”

And in leadership, the difference may matter most of all. One leader asks AI to summarize the market and build the slide. Another asks it to act like a customer, a competitor, a regulator, and an activist investor all at once and reveal what they are still not seeing. That is the difference between assistance and advantage.

This is also why leaders have to fund two paths at once.

Use AI for iteration. Absolutely. Remove what Bill McDermott describes as “soul-crushing work.” Speed up admin. Compress research. Improve service. In healthcare, for example, AI is already helping physicians with research summarization, documentation, and clinical workflows. The AMA reported this month that more than 80% of physicians now use AI professionally, and more than three-quarters believe it improves their ability to care for patients. That is augmentation when it gives people back time for empathy, judgment, and better care.

But do not stop there.

Also use AI for innovation. Create the time, resources, and permission to explore what did not exist yesterday. Ask what product, service, journey, or revenue stream becomes possible now that human imagination and machine capability can work together. If iteration is doing what you did yesterday, innovation is doing what you didn’t do yesterday.

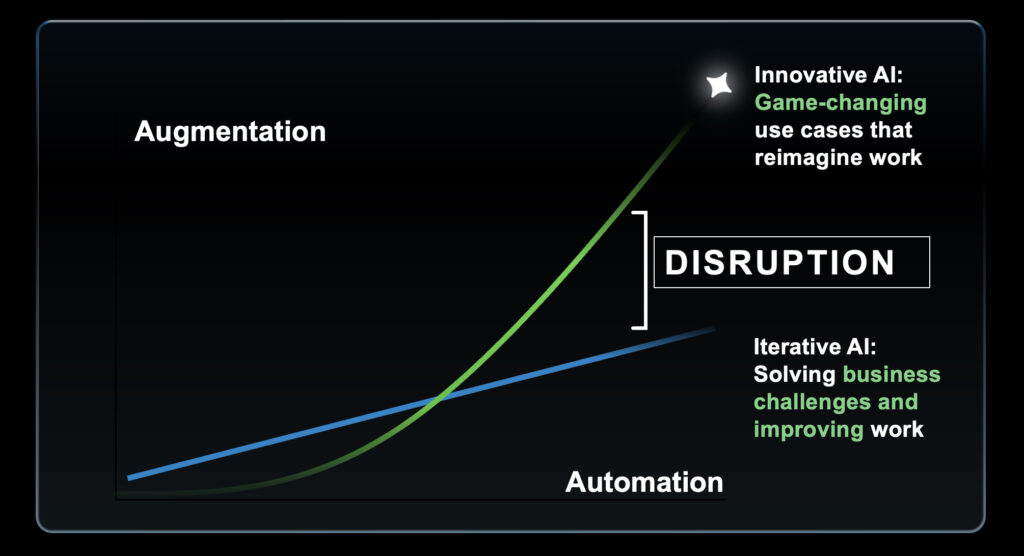

The real promise of generative AI is its duality.

One curve comes from iteration. It removes routine, compresses time, lowers costs, and improves efficiency. That matters. But it is linear. The second curve comes from augmentation and innovation. It helps people discover new value, invent new offerings, redesign experiences, and rethink business models. That is where the exponential upside begins.

Leadership’s job is not to choose one or the other. It is to pursue both. Take the gains from automation and reinvest them into human augmentation, experimentation, and innovation. Because over time, the organizations that only optimize will plateau, while the organizations that also reimagine will pull away.

That is the real choice in front of us.

We can train people to prompt better and produce more polished sameness. Or we can help them become more imaginative, more empathetic, more original, more judgment-driven, and more capable because AI exists.

AI Forward

The future will not belong to the people who simply ask AI for answers.

It will belong to the people who ask better questions because AI exists.

That is the mindshift. That is Augmented IQ. And that is the work.

This is why I closed with a simple but important idea: be AI-forward.

Prioritize the use of AI in exploring possibilities not achievable without it, and outcomes AI could not achieve without you. That is a very different operating model than using AI only to get through inboxes and meetings faster. It asks us to build a practice around curiosity.

Start with a daily exploratory prompt. Use “what if” and “how might we” questions instead of only asking for direct answers. Prompt to explore, not just solve. Ask for perspectives, not just facts. Use role-play. Ask for impossibilities. Keep a journal of the prompts that unlock something unexpected. Set aside time every week to look ten years out, not just ten minutes ahead.

Doing small things in a different way exposes you to new experiences, unlocks new value, creates new habits, and begins to change the future a little at a time. That is how new questions become new behaviors, and how new behaviors become new advantage.

I’ve posted the slides below to help you carry these ideas into leadership meetings, team rituals, design sessions, product roadmaps, and personal practice. The homework is simple: use AI to explore what you could not achieve without it, and never forget what it still cannot do without you.

