This is a thoughtful piece by Danny Devriendt. Thank you, my friend. 🙏

Brian Solis is far from a neutral observer in my SXSW story; he’s a friend I’ve known for a long time, and one of the people who helped me -and a whole generation- see the social web for what it really was back in the day. Long before social media became a relentless ad machine, he wrote The Social Media Manifesto, arguing that this wasn’t a new channel but a rewiring of influence, participation and public dialogue itself. His line back then -“social media is about sociology and anthropology, not technology”- aged uncomfortably well.

This year, after seven years away from SXSW, Brian walks back into Austin with exactly that same sharp, surgical anthropologist’s eye, now pointed straight at generative AI, augmented intelligence and what this all does to our brains, our families, our organizations and our leadership models. His session, “Augmented Intelligence and Leadership in the AI Era,” was, for me, the best explanation of the tenor of this first festival day: less “wow, look at this model,” more “what are we doing to ourselves?”

AI slop, cognitive Darwinism and the hidden AI tax

Brian opens with something delightfully impolite for a room full of AI‑curious professionals: “AI slop.” It is his label for the flood of generic, low‑quality, copy‑pasted AI content choking our feeds, inboxes and internal docs, especially on LinkedIn, where even the comments now smell like a robot‑crazed prompt bonanza. We are, he argues, paying an “AI tax” for this: the invisible hours we lose rewriting, correcting, or summarizing machine‑written sludge just to recover a usable signal (that still sucks).

But the more interesting part is what this does to our heads. Drawing on new brain‑scan and behavioral research, Brian strings together a vocabulary for what is happening: digital amnesia, cognitive offloading, cognitive debt, AI atrophy, AI brain fry. The more we hand off thinking to AI, the more our own cognitive muscles weaken; the more we accept flattering, anthropomorphic feedback from systems, the more we risk confusing statistical pattern‑matching with wisdom or validation. He calls the whole bundle “cognitive Darwinism”: a slow, mostly invisible selection pressure that favors those who outsource their thinking over those who still practice it, until, at some point, the mismatch becomes a problem.

His punchline is nasty and necessary: used badly, generative AI probably deserves cigarette‑style warnings, not just a cheerful onboarding wizard. We are exporting parts of our memory, originality and voice to a machine, and then pretending that the loss is an acceptable side‑effect of getting our slides faster. That’s exactly the kind of convergence SXSW has been pointing at all day: not AI versus humans, but AI acting on humans.

False AI leadership, real divides

Brian then pushes the critique slambang into the boardroom. We are not just drowning in AI content; we are also drowning in “AI journalism” and false leadership: headlines about companies “replacing 40% of their workforce with AI,” markets cheering, and very little serious evidence that any of this is thoughtful redesign rather than opportunistic cost‑cutting with a buzzword attached. When every LinkedIn profile and medium‑sized keynote now speaks with the same AI‑polished voice, “expertise” becomes a vibe rather than a practice, and organizational trust quietly but quickly erodes.

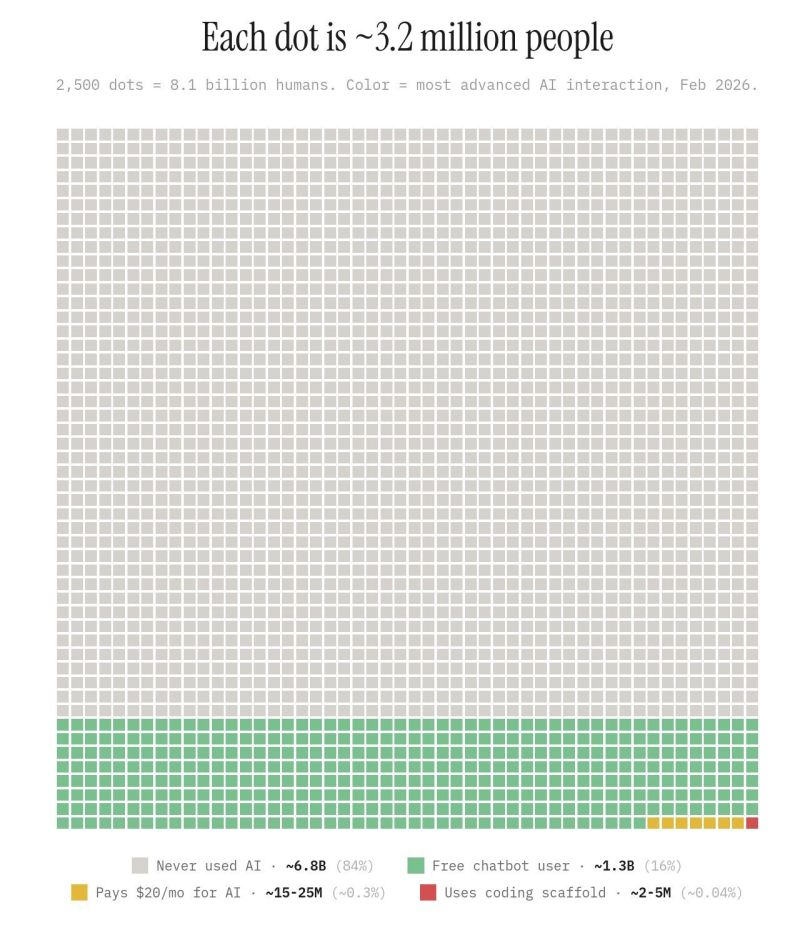

Here he introduces one of the more useful diagrams of the day: the dot map from recent adoption studies -grey dots for non‑users, green for casual free users, yellow for serious paid users, red for builders and coders- each dot representing millions of workers. The scary part is not that the grey dots exist; it’s that yellow‑dot users, the ones who go deep and creative with these tools, are already outperforming green‑dot peers by a factor of seven, while most organizations still talk about “AI fluency” as if this were a uniform, binary skill. That performance gap is not theoretical; it is a structural divide inside your company and your labor market right now, and leaders who ignore it are sleepwalking.

His answer is to broaden what we mean by being “good with AI.” He stacks the usual suspects -IQ, EQ (emotional intelligence), SQ (social agility), and the almost nonexistent skill of genuine self‑awareness- and then adds AIQ: artificial intelligence quotient. But for Brian, AIQ on its own (knowing how to prompt, how to automate tasks) is not enough; it has to be fused into what he calls augmented intelligence: redesigning work so that humans still do uniquely human things -imagine, empathize, ask better questions- while AI extends that reach instead of replacing it. That’s a very different story from the slideware version of “augment your workforce with copilots”.

From mindset to mind shift: practicing augmentation

Brian Solis doesn’t ask for a “mindset shift”; he asks for a mind shift: less inspirational poster, more firmware update. The question is no longer “How do I use AI to do what I already do, but faster?”; the question is “What can I now attempt that was literally impossible for me without these tools?” I think he is spot on.

To get there, he reaches back to Sir Ken Robinson’s classic argument that we don’t grow into creativity; we are educated out of it, rewarded for following rules and punished for being wrong. Most organizations now proudly measure “AI proficiency” and “AI fluency” -how well people can follow the new rules- without noticing that they have simply built an automated status quo. If your first instinct is to use AI to automate the past, Brian warns, you have locked yourself into a very finite future. You will be very efficient at being exactly what you already are.

His alternative is a two‑horizon model he uses with clients. On the first horizon, you do the obvious thing: automate the work that truly should be automated, because it is repetitive and stable, and harvest the efficiency gains. On the second horizon, you deliberately use AI for “innovative AI”: exploring problems, prompts and ideas that you couldn’t touch before, accepting that some of the output will be ugly, and treating that ugliness as the price of originality. The gap between those two trajectories -the efficient line and the augmented line- is what he calls positive disruption: disruption of your own habits, metrics and mental models.

WWAID (What Would AI Do?) and WDYSF (What Do You Stand For?)

I want to highlight two tiny pieces from the session. The first is WWAID: “What Would AI Do?” Before you prompt, before you design a process, before you walk into a strategic decision, you pause and ask yourself: if intelligence were native to this moment -if an agent had perfect recall, perfect pattern‑matching, infinite patience- what would it do by default? Then you use that imagined baseline as a foil. Instead of prompting for the obvious output (“summarize this market report”), you ask AI to adopt roles that pressure‑test your assumptions: be the activist investor, the future regulator, the angry customer, the visionary competitor. Most people interact with AI as if it were Google with better grammar; WWAID is Brian’s hack to push you past that into prompts -and outcomes- you would never have discovered from inside your usual worldview.

The second is a question he treats almost like a personal operating system: “What do you stand for?” Asked from the audience how he protects his own voice in a world of bandwidth pressure and AI assistance, he offers a practice. Regularly, he sits down and writes out what he stands for, why he started this work in the first place, and what impact he actually wants beyond faster deliverables. In a festival that keeps returning to “mattering” as a fundamental human need -the need to feel valued and to add value- that question is not a self‑help bumper sticker; it is a survival skill. If you don’t know what you stand for, the platforms will be very happy to sell you a prefab identity optimized for engagement (in pink, with glitter).

A tuning fork talk

Put Amy Webb’s funeral for trend reports and Brian Solis’ autopsy of AI slop next to each other, and you get a pretty accurate map of SXSW Innovation 2026 so far. On one side, a futurist telling us to stop fetishizing isolated trends and start tracking convergences like human augmentation, unlimited labor and emotional outsourcing at system scale. On the other, a digital anthropologist friend coming back to SXSW showing how those convergences are already playing out inside our own cognition, feeds, organizations and leadership habits.

That’s why his session felt, to me, like the tuning fork of this first half. It explained why so many other sessions kept circling the same unease: AI not just as a productivity layer, but as an invisible force acting on trust, creativity, mattering, and the stories we tell ourselves about being useful in a world of agents and automated factories. Brian doesn’t argue for less AI. He argues for less laziness: less AI slop, less unexamined automation of the past, and far more deliberate augmented intelligence built on empathy, curiosity, creativity, and a brutally honest answer to that one simple question:

what do you stand for?

Read Mindshift | Subscribe to Brian’s Newsletter | Consider Brian as Your Next Speaker
