In interviews in May 2025, and in more detailed, repeated warnings in 2026, Anthropic CEO Dario Amodei has predicted that artificial intelligence could wipe out roughly 50% of all entry-level white-collar jobs within the next one to five years.

Amodei is sounding the alarm to get our attention.

If he’s right, leaders don’t get the luxury of debating whether disruption is coming. They’ll have to manage how it lands. Amodei’s advice is to track where AI is automating work in near real time, redesign roles and workflows so AI creates capacity for new value instead of just headcount reduction, and build redeployment and reskilling paths so people move into the next work, not out of the company. His message is to treat AI like an operating-model change, and act now while you still have time to shape outcomes.

Disruption is inevitable. Leadership, vision, and strategy are a choice.

Otherwise, the AI jobs debate will stay stuck on the binary question: “Will AI take jobs?”

The problem with that question is that it invites hot takes and math inspired by doomscrolling headlines, when what we actually need is proper instrumentation: where AI is showing up, how it’s being used (automation vs. augmentation), and when impacts show up in real labor data.

Challenging the AI Jobs Narrative

If you ask Jack Dorsey, his answer would be, “Yes, in fact, AI just took 4,000 jobs at Block.”

According to a post on X by @Jack, Block cut roughly 4,000 roles (about 40% of a ~10,000-person workforce), with Dorsey framing it as an AI-driven productivity/efficiency shift. This narrative fueled an immediate debate about “AI-washing” vs. real operating leverage.

But then you have to follow up: “Really, Jack!? Did AI really allow you to cut 40% of your workforce, or was that just a great narrative to drive up shareholder value?”

Anthropic’s new labor-market report helps us get a clearer picture of where AI is actually showing up in work today, and how fast the gap is closing between potential and practice.

The Gap Between AI Capability and Real-World Use

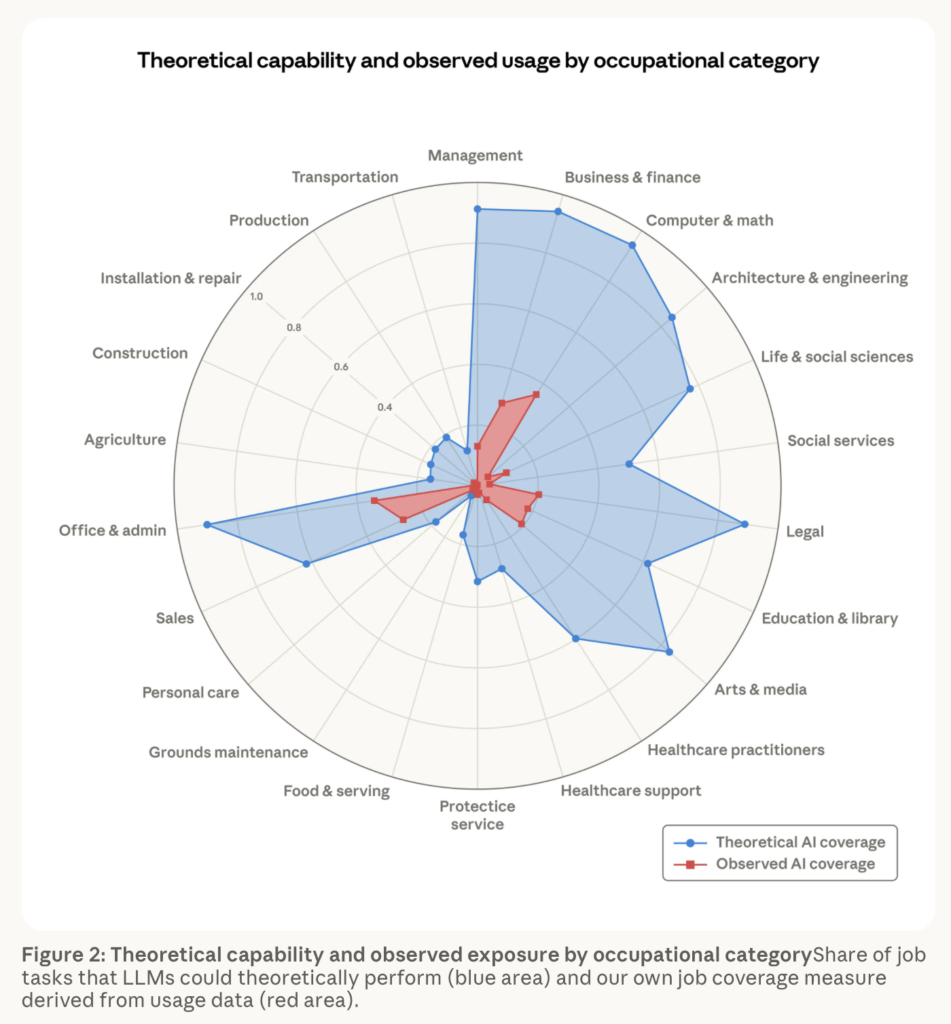

For example, Figure 2 (below) challenges the “AI is taking jobs” narrative, at least today. At first glance, it plots AI’s theoretical capability against current usage, organized by occupational category. What it basically shows is that AI’s practical reach is still much smaller than its theoretical reach.

This is exactly why headlines, and now boardroom conversations, are out of sync with AI’s observed reality. Leaders need a repeatable, trustworthy way to separate signal from story, especially when labor impacts can be ambiguous for years and only obvious in hindsight.

Without it, leaders risk believing the headlines and sparking a self-fulfilling prophecy.

In its report, Anthropic introduces “observed exposure,” a measure of displacement risk that blends theoretical LLM capability with real-world Claude usage, and it weights automated (vs. augmentative) and work-related use more heavily.

The report argues we are still in the “workflow adoption” phase, not the “widespread labor replacement” phase. That does not mean the risk is fake. It means the strongest evidence today points to uneven deployment and early hiring effects, not a wave of economy-wide job loss.

Does this mean we’re in the clear? Not so fast…

For now, AI can do more in theory than businesses are actually using it for today.

But what Anthropic does see is an early, weaker signal in hiring, especially for younger workers trying to enter some exposed white-collar jobs. This means that AI is starting to reshape work and hiring, but it does not yet show up as broad job destruction in the labor market data they studied.

According to Anthropic, a job’s exposure is higher if:

- Its tasks are theoretically possible with AI

- Its tasks see significant usage in the Anthropic Economic Index

- Its tasks are performed in work-related contexts

- It has a relatively higher share of automated use patterns or API implementation

- Its AI-impacted tasks make up a larger share of the overall role

The weighting matters: fully automated implementations get full weight, augmentative use gets half weight, because the labor-market risk isn’t “AI helped,” it’s “AI did.”

In other words: not what AI could do, but what it’s already being used to do at work.
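To make the weighting concrete, here is a minimal sketch. The two weights (1.0 for automated use, 0.5 for augmentative use) come from the description above. Everything else is an assumption for illustration: the task data is made up, and the simple weighted sum is not Anthropic's published formula.

```python
# Weights as described in the text: automated use counts fully, augmentative use counts half.
AUTOMATED_WEIGHT = 1.0
AUGMENTED_WEIGHT = 0.5


def weighted_usage(automated_share: float, augmented_share: float) -> float:
    """Blend automated and augmentative usage for one task."""
    return AUTOMATED_WEIGHT * automated_share + AUGMENTED_WEIGHT * augmented_share


def role_exposure(tasks: list[tuple[float, float, float]]) -> float:
    """Hypothetical aggregation: sum of task weight in the role times weighted usage.

    Each task is (share_of_role, automated_share, augmented_share).
    """
    return sum(share * weighted_usage(auto, aug) for share, auto, aug in tasks)


# Made-up role with three tasks (shares of the role sum to 1.0).
example_tasks = [
    (0.5, 0.6, 0.2),  # heavily automated task
    (0.3, 0.0, 0.4),  # AI only assists here
    (0.2, 0.0, 0.0),  # no observed AI usage
]

print(round(role_exposure(example_tasks), 2))  # 0.41
```

Note how the second task, where AI only helps, contributes far less than the first. That is the “AI helped” vs. “AI did” distinction in numbers.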

And users are already self-selecting into what LLMs do best: 97% of tasks observed in the Economic Index fall into categories rated theoretically feasible (β=0.5 or 1.0). Even more telling: tasks rated fully feasible (β=1) make up 68% of observed usage; tasks rated not feasible (β=0) are just 3%.

Well, there’s a gap, and that’s the real story.

That gap is also the transformation window, where leadership decisions (governance, incentives, redesign, risk, compliance) determine whether AI stays a co-pilot or becomes a pilot (and infrastructure).

In “Computer & Math” roles, they estimate 94% of tasks are theoretically feasible for LLMs, but Claude’s current coverage is only 33%. Capability is racing ahead. Adoption and deployment depth are not.
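The size of that gap is simple arithmetic on the two figures quoted above. The sketch below uses a hypothetical helper, `adoption_gap`, that is not from the report.

```python
def adoption_gap(theoretical_pct: float, observed_pct: float) -> tuple[float, float]:
    """Return the gap in percentage points and the share of potential already in use."""
    gap_pp = theoretical_pct - observed_pct
    realized_share = observed_pct / theoretical_pct
    return gap_pp, realized_share


# "Computer & Math" figures from the text: 94% theoretically feasible, 33% observed coverage.
gap_pp, realized = adoption_gap(94, 33)
print(f"Gap: {gap_pp} pp; share of potential in use: {realized:.0%}")
# Gap: 61 pp; share of potential in use: 35%
```

Roughly a third of the theoretical potential shows up in observed usage, even in the category where capability is highest.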

This is the part most narratives miss: capability headlines don’t mean much; deployment depth is where organizations either replatform work, or don’t.

Where does the impact concentrate first?

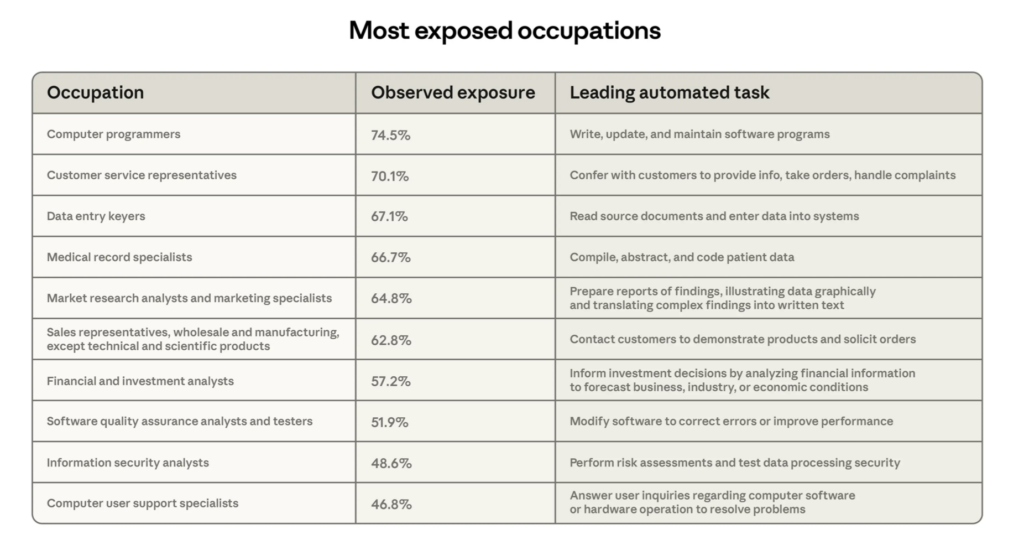

The “most exposed” list reads like a roadmap of early automation:

- Computer Programmers: 75% coverage | Leading Automating Task: Write, update, and maintain software programs

- Customer Service Representatives: 70% | Leading Automating Task: Confer with customers to provide info, take orders, handle complaints

- Data Entry Keyers: 67% | Leading Automating Task: Read source documents and enter data into systems

- Medical Records Specialists: 66% | Leading Automating Task: Compile, abstract, and code patient data

- Market research analysts and marketing specialists: 65% | Leading Automating Task: Prepare reports of findings, illustrating data graphically and translating complex findings into written text

- Sales representatives, wholesale and manufacturing, except technical and scientific product: 63% | Leading Automating Task: Contact customers to demonstrate products and solicit orders

- Financial and investment analysts: 57% | Leading Automating Task: Inform investment decisions by analyzing financial information to forecast business, industry, or economic conditions

- Software quality assurance analysts and testers: 52% | Leading Automating Task: Modify software to correct errors or improve performance

- Information security analysts: 49% | Leading Automating Task: Perform risk assessments and test data processing security

- Computer user support specialists: 47% | Leading Automating Task: Answer user inquiries regarding computer software or hardware operation to resolve problems

At the other end, 30% of workers show zero coverage in their dataset—jobs where tasks don’t show up enough in usage to clear their threshold. That alone should temper the most breathless headlines.

I want to point out an important nuance: “zero coverage” isn’t “zero exposure forever.” Anthropic notes this is often a measurement boundary, where tasks appeared too infrequently in their sample to meet the minimum threshold. Said another way, AI impact won’t be universal or simultaneous. It will be uneven, threshold-based, and shaped by where work becomes digitized enough to be absorbed into workflows.

Where AI Is Hitting First

Now for the part leaders should not ignore: the entry-level signal.

Anthropic does not find broad unemployment effects yet. What it does find, though, is an early shift in hiring, especially for younger workers. Beginning in 2024, workers ages 22–25 pursuing highly exposed occupations show an estimated 14% decline in job-finding rates versus 2022. The evidence is modest, but it may be an early signal of where disruption appears first.

Two extra data points that sharpen this: job-finding rates in less exposed occupations stay around 2% per month, while entry into the most exposed jobs drops by about half a percentage point. And they note this pattern does not show up for workers older than 25.

They also offer plausible alternative interpretations (not just leaving things at “AI did it”): entrants could be staying put, switching to different occupations, or returning to school.

This is how disruption often begins, however. It doesn’t start with mass layoffs, but with quieter signals that compound over time.

If the first rung of the career ladder changes, everything above it eventually changes too.

Unemployment is a lagging indicator. The early signal is often career access: who gets the job, who gets trained, who gets mentored, and who gets filtered out before they ever count in the data.

Also worth noting: the “most exposed” group skews more female (+16 pp), earns 47% more on average, and is far more credentialed, with graduate degrees at 17.4% vs. 4.5% in the unexposed group. This isn’t only a story about “low-skill” work.

This is primarily a white-collar, highly educated workforce story, which means leaders need to think beyond hourly labor and focus on how professional roles, knowledge work, and entry-level career paths are being redesigned vs. how they should be designed.

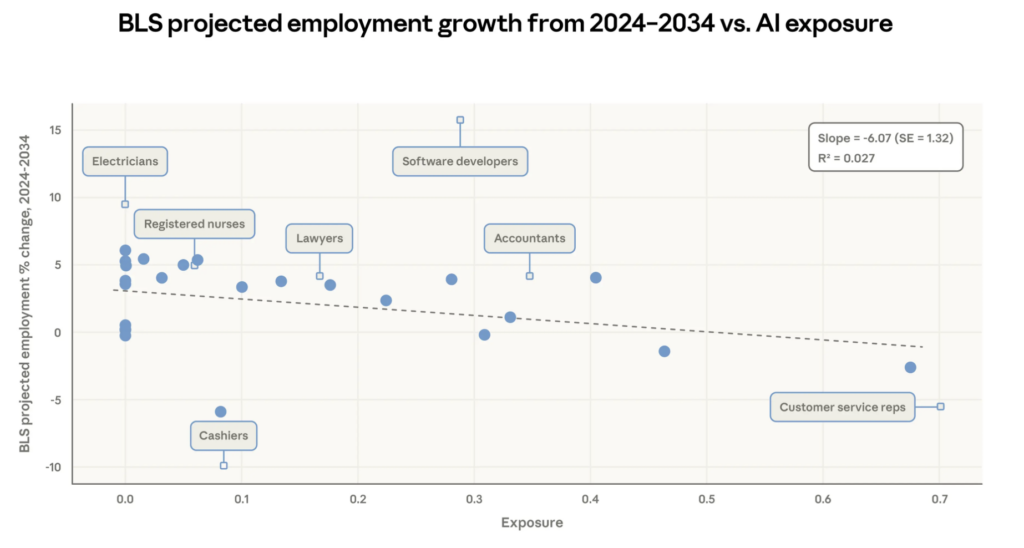

One more stat that supports measurement over myth: Anthropic compares exposure to the US Bureau of Labor Statistics (BLS) projections (2024–2034) and finds a slight relationship: every +10 percentage points of coverage corresponds to about a 0.6 percentage point lower projected growth. Said another way, AI exposure appears to be showing up most in occupations that already look like they’ll grow less. This suggests observed exposure can function like an early-warning device for where job redesign and hiring friction might appear first.
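As a rough worked example of that relationship, the sketch below applies the quoted slope to two coverage figures from the “most exposed” list. The function name is hypothetical. The slope is an average association across occupations, so treat the result as an illustration of scale, not a forecast for any single job.

```python
# From the text: about 0.6 pp lower projected growth per +10 pp of coverage.
SLOPE_PP_PER_10PP = -0.6


def implied_growth_difference(coverage_a_pct: float, coverage_b_pct: float) -> float:
    """Difference in projected growth (pp) implied by the average relationship, A vs. B."""
    return SLOPE_PP_PER_10PP * (coverage_a_pct - coverage_b_pct) / 10


# Computer programmers (75% coverage) vs. computer user support specialists (47%).
diff = implied_growth_difference(75, 47)
print(f"{diff:.2f} pp")  # -1.68 pp
```

A 28-point coverage difference maps to under two points of projected growth, which is why “slight” is the right word.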

My takeaway: AI isn’t just improving productivity. It’s changing the unit of work. And once the unit of work changes, everything downstream changes: org design, hiring, training, compensation, and career paths.

And impacts may not be linear. The report explicitly raises the idea that some jobs behave like “O-ring” systems, where you don’t see real employment effects until most tasks have AI penetration. That’s another reason the “% exposed” debate is misleading. The “O-ring” idea points to a process that can be limited by its weakest link. If one part still needs a human, you may not see big labor impact until most parts are AI-capable and integrated.
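A toy comparison shows why the weakest-link view changes the picture. This is my own simplified sketch, not a model from the report: the 90% threshold, the function names, and the ten-task job are all assumptions chosen only to illustrate the shape of the curve.

```python
def linear_effect(ai_tasks: int, total_tasks: int) -> float:
    """Naive view: labor impact grows in proportion to the share of tasks AI can do."""
    return ai_tasks / total_tasks


def weakest_link_effect(ai_tasks: int, total_tasks: int, threshold: float = 0.9) -> float:
    """O-ring-style view: no visible labor impact until AI covers most of the workflow.

    The threshold is a hypothetical parameter, not a figure from the report.
    """
    share = ai_tasks / total_tasks
    return share if share >= threshold else 0.0


# A made-up job with 10 tasks, as AI capability spreads across them.
for ai_tasks in (3, 6, 9, 10):
    print(ai_tasks, linear_effect(ai_tasks, 10), weakest_link_effect(ai_tasks, 10))
```

Under the naive view, 60% task penetration looks like 60% impact. Under the weakest-link view, it looks like nothing at all, right up until it doesn't.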

The real question is, when will AI cross the tipping point where the workflow can be redesigned around it?

Anthropic also stress-tested the “no unemployment impact” finding by moving the “high exposure” bar from the median all the way to the 95th percentile and cross-checking against unemployment insurance claims. The result: no clean unemployment signal yet. The trend stays essentially flat, even under more aggressive assumptions.

Leaders, here’s a question you should be asking: How are you redesigning entry-level roles, apprenticeship paths, and early career development in an AI-first workplace? Do your part in preserving the talent pipeline and explore where roles can be redesigned and augmented with AI, not automated by AI.

Please read Anthropic’s report to get the full story/analysis.

